Sonification: turning the yield curve into music

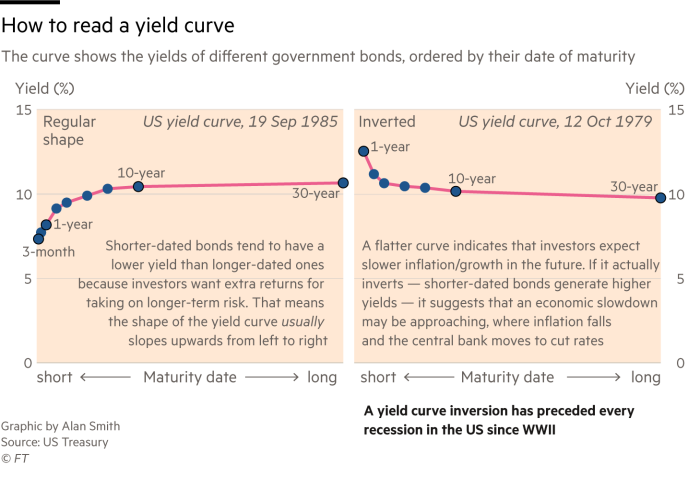

It always pays to be aware of a chart’s limitations, even when the chart is a useful one. Take the iconic yield curve, a chart that shows the yields of government bonds of varying maturities. Analysts use the shape of the curve to gauge market expectations. It might even help predict recessions.

But with yields changing on a daily basis, showing how the curve evolves over time is not easy. That is because the conventional chart type for showing time series, a line chart, uses the horizontal axis to show time running from left to right. On the yield curve, that axis is occupied by each bond’s maturity date.

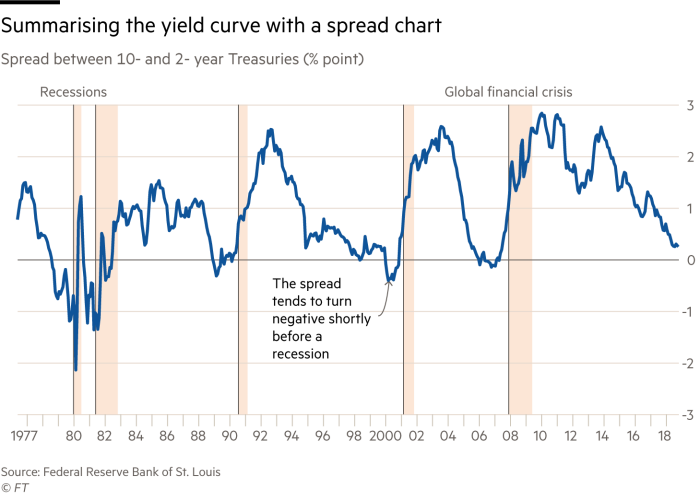

Instead, analysts often chart the “spread”: the percentage point difference between the yields of two bonds (typically the 2-year and the 10-year). This produces a useful summary of the cyclical peaks and troughs of market expectations and how they might relate to recessions, but it also throws away a lot of information from the underlying yield curve.

For example, we can no longer see what the actual yields are — have they been high or low? — and there is always the risk that the particular spread chosen might not be representative of the shape of the entire curve. And how much does the curve change on a daily basis anyway?
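To make the loss of information concrete, here is a minimal sketch of a spread calculation for a single day. The yields are invented for illustration, not Treasury data, and the `spread` function is a hypothetical helper rather than anything used for the charts in this article.

```python
# Hypothetical one-day yield curve: maturity in years -> yield in per cent.
# These figures are invented for illustration, not real Treasury data.
curve = {0.25: 2.40, 2: 2.50, 5: 2.45, 10: 2.60, 30: 3.00}

def spread(curve, short=2, long=10):
    """Percentage point gap between the long and short maturity yields."""
    return round(curve[long] - curve[short], 2)

two_ten = spread(curve)
two_five = spread(curve, short=2, long=5)

print(f"2-year/10-year spread: {two_ten:+.2f} percentage points")
print(f"2-year/5-year spread:  {two_five:+.2f} percentage points")
```

In this made-up example the 2-year/10-year spread is positive, so the summary chart would show nothing unusual, even though the 5-year yield has already dipped below the 2-year. A single number also says nothing about whether yields overall are high or low.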

In 2015, Gregor Aisch and Amanda Cox of the New York Times addressed this problem by rendering the yield curve in 3D. It is a great interactive graphic that shows the yield curve in a new light.

Animation is another approach worth investigating — after all, using time itself to represent time seems logical. A rapid daily animation of the yield curve shows a month’s worth of data in half a second, a year in around six seconds. Tiny daily oscillations in the curve are now visible alongside more significant monthly and annual movement.

One problem with animation is that, with data changing so fast, it becomes difficult to remember key moments in the yield curve’s progression. Adding “memory lines” with text annotations of the curve’s “peak inversions” allows us to directly compare the yield curve at different points in time.

Extending the animation to show how the yield curve has changed on a daily basis from 1979 to the present day results in a video just over three minutes long. It is compelling but, over such a duration, almost eerily silent.

Of course, we could choose to add a Hans Rosling-style commentary — but could we use the data itself to produce a soundtrack?

The process of turning spreadsheets into sound is known as data sonification, the aural equivalent of data visualisation. And it might just be the next big thing in data presentation.

With our animation, the first challenge is to map the bond yields (the y-axis of the chart) to musical pitches.

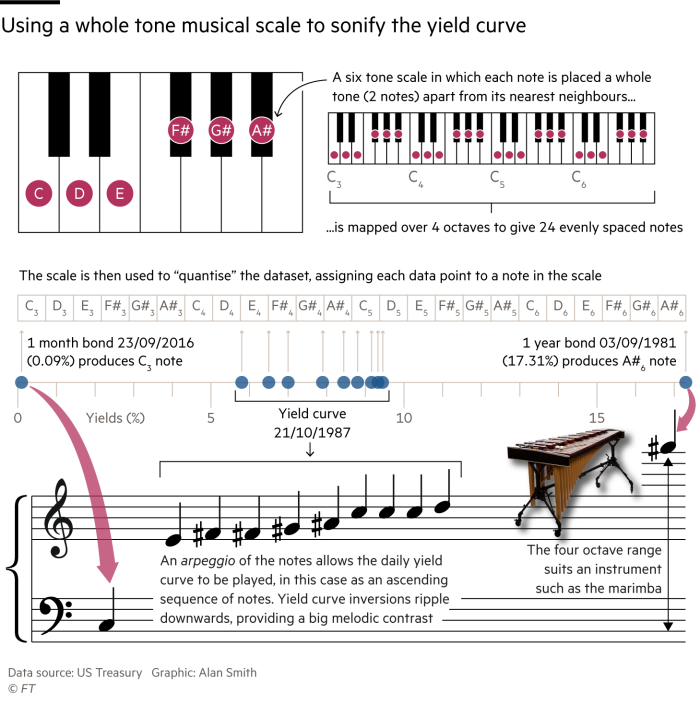

While it might be tempting to think in terms of orthodox musical scales (“recession in C minor”, “the great bear run in F# major”), our “music” ideally needs to preserve equal spacing between pitches, in the same way that tick marks preserve distance on the chart’s y-axis. The more unusual whole tone scale, beloved of Debussy, ensures that our data will be proportionally spaced in pitch. The scale was also used last year by Berliner Morgenpost to sonify SPD polling data.

Mapping a whole tone scale over four octaves gives 24 evenly-spaced notes with which we can sonify the yield curve data.
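The mapping can be sketched in a few lines. This is an illustration under stated assumptions, not the code behind the animation: the starting pitch (MIDI note 36) and the yield range of 0 to 16 per cent are guesses chosen to make the example work, and both function names are hypothetical.

```python
import math

def whole_tone_scale(base_note=36, octaves=4):
    """MIDI note numbers for a whole tone scale: six notes per octave,
    each two semitones above the last. base_note=36 is an assumption."""
    return [base_note + 2 * step for step in range(6 * octaves)]

def yield_to_note(y, scale, y_min=0.0, y_max=16.0):
    """Snap a yield (in per cent) to the nearest note of the scale.
    The 0-16 per cent range is assumed, not taken from the article."""
    y = min(max(y, y_min), y_max)  # clamp values outside the assumed range
    position = (y - y_min) / (y_max - y_min) * (len(scale) - 1)
    return scale[math.floor(position + 0.5)]  # round half up to a scale index

scale = whole_tone_scale()
print(len(scale))                                            # 24
print([yield_to_note(y, scale) for y in (0.0, 8.0, 16.0)])   # [36, 60, 82]
```

Because every step in the scale is the same size, equal differences in yield land on equal differences in pitch, which is the property the chart's y-axis has and a major or minor scale lacks.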

Next up is the challenge of how to arrange the notes. In musical terms, the yield curve visually resembles an arpeggio — a sequence of notes in which one is played after the other, rather than a chord, where all the notes are played simultaneously.

When the yield curve is played as an arpeggio, regular, flat and inverted shapes become ascending, level and descending sequences of musical notes.
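The link between curve shape and melodic direction can be shown with a small sketch. The note values are invented, the function name is hypothetical, and comparing only the first and last notes is a deliberate simplification: a real curve can bend in the middle.

```python
def arpeggio_direction(notes, tolerance=0):
    """Label a day's notes (shortest maturity first) by overall contour.
    Only the end points are compared, which is a simplification."""
    change = notes[-1] - notes[0]
    if change > tolerance:
        return "ascending"    # regular curve: long yields above short yields
    if change < -tolerance:
        return "descending"   # inverted curve: short yields above long yields
    return "flat"

# Invented MIDI note sequences, one per curve shape
examples = {
    "regular": [40, 44, 48, 52],
    "flat": [50, 50, 50, 50],
    "inverted": [56, 52, 48, 46],
}
for shape, notes in examples.items():
    print(shape, "->", arpeggio_direction(notes))
```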

Playing each day of data as an arpeggio would require us to slow down the animation dramatically to identify individual notes. Instead, we can trigger an arpeggio sequence every 30 days while maintaining the same rapid rate of animation for the visual. The monotony of barely-changing daily tones is replaced by much greater melodic movement.
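A rough sketch of that thinning step follows. It assumes "every 30 days" means every 30th row of daily data, which the article does not specify, and it uses invented notes. The function names are hypothetical.

```python
def arpeggio_triggers(n_days, every=30):
    """Indices of the days on which an arpeggio starts."""
    return list(range(0, n_days, every))

def schedule(daily_notes, every=30):
    """Map each trigger day to that day's notes; all other days stay silent
    in the audio while the visual animation keeps showing every day."""
    return {day: daily_notes[day]
            for day in arpeggio_triggers(len(daily_notes), every)}

# Invented data: 90 days of three-note curves that drift up
# by one whole tone (two semitones) every 30 days
daily_notes = [[40 + 2 * (day // 30), 44 + 2 * (day // 30), 48 + 2 * (day // 30)]
               for day in range(90)]

for day, notes in schedule(daily_notes).items():
    print(day, notes)
```

Only three of the 90 days produce sound here, so each arpeggio has room to be heard as separate notes.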

For orchestration, a marimba’s pitch range is perfect for our four octave scale — though one of the advantages of controlling sound in the digital domain through data is that it gives the option of unlimited remixes.

A bass drum synchronised to the month and vocal samples that “sing” the year allow us to track the changing yield curve over time. A delay effect on the vocal sample repeats it each quarter to indicate progress through the year. The relative level of yields (high/low), the shape of the yield curve (regular/flat/inverted), and the progress of time become encoded in sonic form.
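One way to derive those time markers from the dates in the data is sketched below. The event names are hypothetical labels, the dates are invented, and treating April, July and October as the quarterly echo points is an assumption about how the delay was timed.

```python
from datetime import date

def time_markers(dates):
    """Return (date, event) pairs: a year marker on the first data day of
    each year, a drum hit on the first data day of each month, and an echo
    on the first data day of April, July and October (assumed quarters)."""
    events = []
    previous = None
    for d in dates:
        if previous is None or d.year != previous.year:
            events.append((d, "vocal_year"))
        if previous is None or (d.year, d.month) != (previous.year, previous.month):
            events.append((d, "bass_drum"))
            if d.month in (4, 7, 10):
                events.append((d, "vocal_echo"))
        previous = d
    return events

# Invented trading days, in chronological order
days = [date(2019, 1, 2), date(2019, 1, 3), date(2019, 2, 1),
        date(2019, 4, 1), date(2020, 1, 2)]

for d, event in time_markers(days):
    print(d.isoformat(), event)
```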

Adding a sound effect to denote our “peak inversion” annotations provides a final join between the visual and aural worlds, completing our transformation of the yield curve.

Sonification has tremendous potential not only to support and reinforce visuals, but also to take data to new audiences.

Chancey Fleet is assistive technology co-ordinator at New York’s Andrew Heiskell Braille and Talking Book Library. She has a particular interest in accessible technology and how it can bring data into the everyday life of those who, like herself, cannot directly see data visualisations.

Watching Ms Fleet listen to the yield curve and sketch its shape on her tactile drawing tablet provides a tantalising glimpse of what sonification might be able to do for blind or visually impaired users.

She immediately suggests an improvement: a “sonic legend” to provide a primer on interpreting the data. And while sensible audio defaults are a good starting point, sonification is something users should be able to personalise.

But, overall, she considers the experimental yield-curve music to be a promising approach that allows her to parse information from a complex time series of over 100,000 data points.

I sonified the yield curve by writing code and using specialist music software. But for this technique to truly take off into the everyday, it needs to be publicly accessible. A new open source tool funded by Google’s Digital News Initiative promises to do just that.

To coincide with New York Open Data 2019 week, NYC-based Datavized has released TwoTone, an interactive tool for making music with data. The company has already explored virtual and augmented reality, but according to co-founder Hugh McGrory, the first mass adoption of immersive data storytelling will be in audio “because the barrier to entry is zero: everybody already has a pair of headphones”.

According to Mr McGrory, pre-release testing of TwoTone saw everything from “millennials chair dancing to music made from spreadsheets”, to intense interest from cyber security outfits keen to listen to data in real time.

Some might be inclined to dismiss sonification as a novelty, but a new generation of screenless devices with voice interfaces, such as Amazon’s Alexa, marks the end of silent interaction with computers. It is perhaps naive to think that data will continue to just be seen and not heard.

The tools behind the musical yield curve

Historical yield curve data are freely available from the US Treasury. The data animation was created using the open source data visualisation library D3.js, with WebMidi.js generating simultaneous MIDI (Musical Instrument Digital Interface) note messages. Apple’s Logic Pro X was used for sound generation.
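For readers unfamiliar with MIDI, a note message is just three bytes: a status byte that says "note on" or "note off" and names the channel, a note number from 0 to 127, and a velocity (loudness) from 0 to 127. The sketch below builds those bytes in Python purely to show the format. It is not the WebMidi.js code used for the animation, and the helper names are hypothetical.

```python
NOTE_ON = 0x90   # status byte for note-on on the first channel
NOTE_OFF = 0x80  # status byte for note-off on the first channel

def _check(value, upper, name):
    if not 0 <= value <= upper:
        raise ValueError(f"{name} must be between 0 and {upper}")

def note_on(note, velocity=100, channel=0):
    """Three-byte MIDI note-on message. channel runs from 0 to 15."""
    _check(note, 127, "note")
    _check(velocity, 127, "velocity")
    _check(channel, 15, "channel")
    return bytes([NOTE_ON | channel, note, velocity])

def note_off(note, channel=0):
    """Three-byte MIDI note-off message with zero release velocity."""
    _check(note, 127, "note")
    _check(channel, 15, "channel")
    return bytes([NOTE_OFF | channel, note, 0])

# Middle C (MIDI note 60) switched on, then off
print(note_on(60).hex(" "))
print(note_off(60).hex(" "))
```

A synthesiser or a program such as Logic Pro X receives messages like these and decides what they sound like, which is why the same data can be replayed on a marimba or any other instrument.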
