Adam Selipsky: There will not be one generative AI model to rule them all


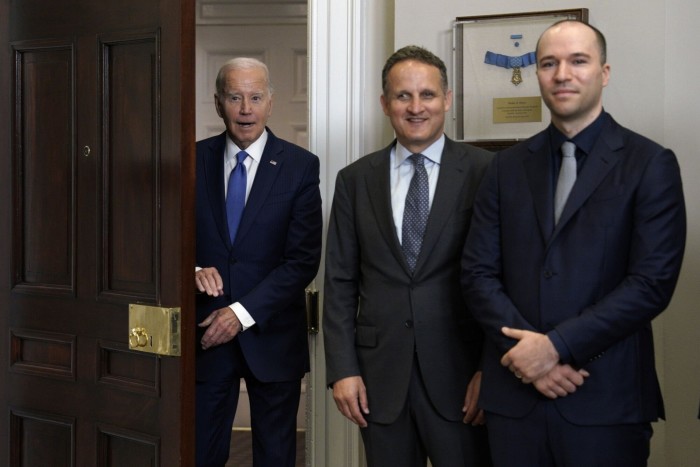

When Andy Jassy stepped up to replace Jeff Bezos as chief executive of Amazon in 2021, he lured back one of the ecommerce group’s former executives to succeed him as head of its cloud computing division, Amazon Web Services.

Adam Selipsky rejoined the company after five years away running Tableau, a data analysis and visualisation company. But he already had 11 years’ experience of working with Jassy at AWS — giving him vital knowledge of the business, and its culture, as it competes with Google and Microsoft to run artificial intelligence models in the cloud.

Since then, Amazon has announced a $100mn programme to connect customers and engineers focused on AI, and make AWS the go-to cloud provider for generative AI.

Here, Selipsky talks to the FT’s west coast editor, Richard Waters, about customer choice amid the most important technological advance since the internet.

Richard Waters: We’ve heard a lot about generative artificial intelligence this year — but we’ve heard more about it from Google and Microsoft than we have from Amazon. How important is this technology to you and to your customers?

Adam Selipsky: Generative AI is critical to Amazon, critical to Amazon retail customers, and critical to AWS customers. We think that generative AI is going to significantly impact — and, in many cases, reinvent — almost every application that any of us interact with, either in our personal lives or our professional lives.

Tech Exchange

The FT’s top reporters and commentators hold monthly conversations with the world’s most thought-provoking technology leaders, innovators and academics, to discuss the future of the digital world and the role of Big Tech companies in shaping it. The dialogues are in-depth and detailed, focusing on the way technology groups, consumers and authorities will interact to solve global problems and provide new services.

Amazon is deeply invested in AI and machine learning and has been for a long time. If you back way up, Amazon’s been doing AI for 25 years: the personalisation on the Amazon website circa 1998 was AI. It was just that nobody called it AI at that time.

But we are taking a little bit of a different approach to other companies. We are squarely focused in AWS on what AWS customers need: on business and organisational applications. I think a couple of other companies have been building — or talking about — consumer-facing chat interfaces, which is fine. It’s super interesting and will absolutely have a use. But it’s not what a significant enterprise needs. We’re really focused on building what AWS customers need.

RW: So what are you building? What kind of AI platform are you building now?

AS: I’ll start at the bottom of the stack, the bottom of the three layers, and work up. So, at the bottom layer of the stack, there will be some companies who want to build their own models. And so you’ve got some start-ups who are doing that — folks like [the AI public benefit corporation] Anthropic.

Building and training these large language models is exceptionally compute intensive and, therefore, very expensive. People spend hundreds of millions of dollars. There’s talk about it costing billions of dollars to train models in the future, which may have trillions of parameters. I don’t think anybody really knows which models are going to be the “successful ones”.

Most folks assume that there will be a relatively small number of very large models which are powerful and effective and good at doing a lot of things. I think a lot of folks also believe that there will be smaller, built-to-fit models for specific use cases. You might have a smaller model which is tailored for helping developers to code, or built to discover new compounds for drugs.

I don’t think anybody really understands yet what the relative value is going to be of the larger, more general-purpose models versus the smaller, more targeted models, which will be less expensive to build and train — and maybe less effective, maybe more effective. We’re trying to set up for a world in which our customers want both types of models.

RW: Microsoft has been working with OpenAI for four years. With that amount of experience, and that head start, they have a real advantage in perfecting or optimising the platform for this kind of technology. Are you behind?

AS: No, I think that’s just flat out untrue. I’ve seen numbers recently published that suggested AWS is at least twice as large as [Microsoft’s cloud computing service] Azure. So our experience in running technology and systems at massive scale is unparalleled. We have far more customers across every industry, every use case, every country . . . So I think, just in terms of overall cloud capabilities, it’s really not even close. We have by far the broadest and deepest set of capabilities and of customers.

Then, specifically in machine learning, we released SageMaker in 2017. So we’ve had a machine learning platform since 2017, and have over 100,000 customers using it already.

We’ve even been building our own LLMs. And not all of our large competitors are choosing to build their own models. Some have just outsourced the models that they’re going to use to other companies, which is an interesting choice, but not the choice we’re making.

It’s interesting that somebody who’s not running their own models [like Microsoft, which has outsourced to OpenAI] would argue they’ve got such deep expertise in this area.

RW: I wanted to come on to that point: whether this is a technology that big companies like yours need to develop in-house, how core it will be in the long term. As you say, Microsoft has outsourced this work to OpenAI. What can you tell us about your own work on LLMs? How long have you been at it?

AS: Let’s move up to the second layer of the stack, and talk about having models in production that customers can use. We’ve released a service called Amazon Bedrock. It is a managed service for accessing many different foundation models for generative AI. A key concept here which others don’t seem to be embracing is that customers need choice in generative AI; customers need to be able to experiment.

We’re maybe three steps into a 10K race, and the question should not be, ‘Which runner is ahead three steps into the race?’, but ‘What does the course look like? What are the rules of the race going to be? Where are we trying to get to in this race?’

If you and I were sitting around in 1996 and one of us asked, ‘Who’s the internet company going to be?’, it would be a silly question. But that’s what you hear . . . ‘Who’s the winner going to be in this space?’

Generative AI is going to be a foundational set of technologies for years, maybe decades to come. And nobody knows if the winning technologies have even been invented yet or if the winning companies have even been formed yet. So what customers need is choice. They need to be able to experiment. There will not be one model to rule them all. That is a preposterous proposition.

A central element of our approach is choice for customers. So, to get to your question, Amazon is building its own models but we don’t think that’s the only answer for our customers. We’re very committed to providing choice. So we’re also providing access to Anthropic’s models. Anthropic is a leading start-up — you often hear about OpenAI and Anthropic in the same breath. Anthropic’s models are in there today.

The leading company for doing [AI] images — not language — is Stability AI. Stability’s models are in there. We also have other start-ups in there, like AI21. I’m confident we’ll add other models from other companies or open source over time.

Companies will figure out that, for this use case, this model’s best; for that use case, another model’s best . . . That choice is going to be incredibly important.

The second concept that’s critically important in this middle layer is security and privacy. AWS customers demand bulletproof security. They demand privacy. We never go to them with a database that’s not fully secure. We never go to them with a storage service which is not fully secure. And we would never go to them with a generative AI capability that’s not fully secure and private.

All of these models run in an isolated environment in your own virtual private cloud, what we call a VPC. The data never travels out over the internet. It’s always encrypted.

If you make any improvements to the model, that model’s running in your [private] environment. Those improvements aren’t going to go back to the mother ship so that your competitors get a better model to use.

A lot of the initial efforts out there launched without this concept of security and privacy. As a result, I’ve talked to at least ten Fortune 1000 CIOs who have banned ChatGPT from their enterprises because they’re so scared about their company data going out over the internet and becoming public — or improving the models of their competitors.

Now, of course, some of these companies are circling back and saying, “Hey, we’re going to produce secure versions of this.” But it’s not only about the capabilities. It’s about the attitude and the philosophy around security. Our enterprise customers demand that everything we do be secure, not just in version two.

RW: Would you like to offer OpenAI’s models on your platform, to your customers? Not the application ChatGPT, but GPT-4 as a foundation model? Is that something you’d like to offer?

AS: Well, we’re committed to offering choice to our customers. So we’re open to discussions [about] any models that are going to be useful for customers, where there’s a good fit. So I would say yes to any model that our customers would find interesting.

RW: But it seems OpenAI is going to be exclusive to Microsoft for a while, so maybe . . .

AS: I don’t know the answer to that question. I don’t know.

RW: You make the point that people want different models: they want to be able to differentiate. So how are you going to be differentiating Titan [Amazon’s own family of foundation models]? Why would anyone use Titan? What will it do that other models don’t do?

AS: One thing that is important about Amazon’s effort in building and training our models is that we’re taking extreme care on issues of responsible AI.

I suggest that all customers be very conscious of what data their model providers are using to train the models. Amazon is being extremely careful to make sure that any data we use to train the models is appropriate. I’ve seen various articles in the press asking whether folks have rights to the data they’ve used to train the models. We’re making sure we take extreme care on issues of intellectual property.

In addition, there’s been a lot of talk about “hallucinations” in models — models making up answers to questions. It’s a very important problem to solve. In our own Titan models, we are employing new techniques and technologies to minimise the possibility that the model would make something up and represent it as fact, and also to build checks into the model so the model would know if it’s made something up.

RW: On LLMs, will there be one model to rule them all? I remember, in the early days of search engines, when there was a prediction we’d get many specialised search engines . . . for different purposes, but it ended up that one search engine ruled them all. When you talk to OpenAI and companies like that, they have a similar view that a larger model can also take on specialised roles. So, might we end up with two or three big models?

AS: Do you know who the leading search engine was in 1997?

RW: That’s a good question. I guess Yahoo . . .

AS: AltaVista.

RW: Oh, AltaVista. Yeah!

AS: Now, my children have never heard of AltaVista. So, even if you believed there was going to be one model to rule them all, that model may or may not have been created yet. That just buttresses the case that we need choice.

Look at databases: there’s not one database to rule them all. There are many powerful database offerings out there. Look at online shopping, online commerce. As passionate as I am about Amazon, we have robust competition in every segment that we operate in, and customers have lots of choices in online commerce.

The most likely scenario — given that there are thousands or maybe tens of thousands of different applications and use cases for generative AI — is that there will be multiple winners. Again, if you think of the internet, there’s not one winner in the internet.

Lots of folks have said that generative AI is perhaps the most important technological advance in this era since the internet. If you go down that road, then you ask yourself: “Was there one winner in the internet?” And the answer is usually no.

The most reasonable hypothesis is that there would not be a single winner here. There’d be multiple use cases across a myriad of customers requiring more than one solution.

RW: But there’s a concern that a small number of tech companies are going to dominate this technology, not just because there’s a natural monopoly in LLMs, but because you have the cloud platforms. We already know there aren’t many competitive cloud platforms. This is hugely expensive stuff to develop and create. So might we end up with three or four big tech companies dominating this area?

AS: What’s shaping up is very robust competition, which is appropriate, and the way it should be. It’s quite encouraging how much competition is shaping up. You’ve got multiple cloud providers who will offer generative AI services, and we’re all going to compete vigorously against each other — just as we have for years. There’s good competition there.

Then, if you look at the actual models themselves, many of the leading models are being produced by start-ups today — whether it’s Anthropic, OpenAI, Stability AI, RunwayML in the video space, AI21, not to mention all these open-source models which lots of folks are looking at: [Meta’s] LLaMA, and so forth.

If you just look at the array of models that I just mentioned, that does not at all look like a couple of big established companies acting in any way anti-competitively.

RW: We’ve seen the FTC write to OpenAI saying there are a whole bunch of questions here about data privacy, accuracy, misinformation. As users, I’m sure we all applaud this, because it’s great to see someone really kicking the tyres on these models. But do you think that your customers are going to go slower on this technology?

AS: I think our customers are going to move very quickly to adopt generative AI. They see the potential gains as being enormous, but what they need is a trusted partner that will go on this journey with them in a really responsible manner. For our models, we’re committed to being extremely careful and appropriate about the data we use to train them.

We also believe in transparency. For all models in Amazon Bedrock, there’s going to be something called “service cards”, which is essentially information we’ll post online about the types of data that have been used to train the model, who’s done that, and types of use cases, et cetera.

We also talked about security and privacy. Those are big issues. Some customers have slowed down on their initial AI explorations because of their security and privacy concerns. But, now, we see many customers accelerating their investigations and pilots with us, because they’re very comfortable with the security and privacy model that we’re providing them.

That gets us to our third layer of the stack, Richard: we have the chips; we have Bedrock, which is access to the models; then we and our partners and customers will build thousands of applications on top of these models.

Let me give you one that we’re delivering: a coding companion, called CodeWhisperer. It’s being very broadly adopted by developers. You type in words, and it gives you back code. And, in the internal coding challenges that we did, developers were completing their tasks up to 57 per cent faster compared to not using CodeWhisperer.

It’s going to be a really important tool for a great many companies, [enabling] increased productivity for their developers as well as a better experience for their developers — because CodeWhisperer will essentially take away a lot of the heavy lifting [and] let them focus on the really difficult parts and the inventive parts.

RW: We are seeing these incredible productivity benefits [but] what we don’t know yet is the quality of the code — whether it reduces the number of coders, or makes them more productive. Do you think it could impact work?

AS: I will give you an analogy. In the earlier years of AWS, IT groups would ask us, “Are IT jobs going to go away? Are IT departments going to go away? Is the cloud going to render IT obsolete?” Our answer was a big, emphatic “no”.

What it’s done for the most part is allow IT departments to be more productive, and it has improved their lives by allowing them to do more interesting work. So, instead of crawling around data centres at three in the morning trying to figure out if cables have become unplugged, IT departments can design a data strategy for their company.

They can focus on enterprise security and configuring environments with multiple devices that meet their organisation’s security requirements, and other really important, really value-added tasks. What the cloud has really done is: one, taken away a lot of heavy lifting from them; two, provided a really secure, highly reliable environment; and three, enabled both IT departments and lines of business to be more agile and innovate better than before.

I’m sure there are some companies who have gained efficiencies in the amount of staff that they’ve chosen to deploy in IT. But, for the most part, it’s really just made IT more important to the business and able to deliver more to their business partners.

I suspect that we’ll see the same thing play out in the coding companion space — where we certainly don’t see developers going away. Amazon continues to hire a lot of developers. I think this will make our developers more productive and will make developers’ lives better; make their jobs more interesting.

RW: On this question of productivity, how transferable is the experience of coding to other work? It feels like we’re at the very early stage of building applications built on generative AI, so it is impossible to tell how many jobs will be beneficially affected and how many won’t. What can you tell, so far, from what you are seeing and hearing?

AS: We are seeing the potential for virtually every application that you and I interact with to be deeply impacted by generative AI. That will change a lot of processes and mechanisms inside companies. It will change a lot of jobs. I believe that, in the vast majority of cases, that’ll be for the better.

Take the customer service example. It’s very easy to see that generative AI will be able to handle a great number of standard questions that come into a customer service department. That’ll mean customer service agents are actually able to focus on the most important, most difficult types of customer issues instead of answering the same basic questions over and over again.

I think most people would find they have higher job satisfaction in such an environment. Also, you’re going to have higher customer satisfaction because you’re going to really, really train your agents to get better and better at answering more difficult issues.

But this is not going to happen overnight. The models have to get much better than they’ve been.

RW: When the internet came along, there were a lot of predictions about the overall impact on productivity, and it never showed through. So could that happen again, with AI?

AS: The internet has fundamentally changed everything. Look at us just looking up statistics that you used to have to find in an encyclopedia. The internet has fundamentally transformed innumerable experiences and applications both in professional environments, as well as in our personal lives. And we think that generative AI has similar potential.
