The impact of AI on business and society

Artificial intelligence, or AI, has long been the object of excitement and fear.

In July, the Financial Times Future Forum think-tank convened a panel of experts to discuss the realities of AI — what it can and cannot do, and what it may mean for the future.

Entitled “The Impact of Artificial Intelligence on Business and Society”, the event was hosted by John Thornhill, the innovation editor of the FT, and featured Kriti Sharma, founder of AI for Good UK; Michael Wooldridge, professor of computer science at Oxford university; and Vivienne Ming, co-founder of Socos Labs.

For the purposes of the discussion, AI was defined as “any machine that does things a brain can do”. Intelligent machines under that definition still have many limitations: we are a long way from the sophisticated cyborgs depicted in the Terminator films.

Such machines are not yet self-aware and they cannot understand context, especially in language. Operationally, too, they are limited by the historical data from which they learn, and restricted to functioning within set parameters.

Rose Luckin, professor at University College London Knowledge Lab and author of Machine Learning and Human Intelligence, points out that AlphaGo, the computer that beat a professional (human) player of Go, the board game, cannot diagnose cancer or drive a car. A surgeon might be able to do all of those things.

Intelligent machines are, therefore, unlikely to unseat humans in the near future but they will come into their own as a valuable tool. Because of developments in neural technology and data collection, as well as increased computing power, AI will augment and streamline many human activities.

It will take over repetitive manufacturing processes and perform routine tasks involving language and pattern recognition, as well as assist in medical diagnoses and treatment. Used properly, intelligent machines can improve outcomes for products and services.

To stay ahead of the competition, companies must think creatively about how to incorporate AI into their strategy. This report looks at areas where AI can be deployed, some of the issues that may arise and what we should expect to see.

Dealing with data

Adoption of AI has been particularly widespread in the financial services sector. Forrester, the research group, notes that about two-thirds of finance firms have implemented or are adding AI in areas from customer insights to IT efficiencies. Data analysis already detects fraud.

Jamie Dimon, chief executive of JPMorgan, noted in 2018 that as well as having the potential to provide about $150m of benefits each year, machine-learning systems allowed for the approval of 1m “good” customers who might otherwise have been declined, while an equal number of fraudulent applications were turned down.

AI is also useful in stock market analysis. Schroders, the fund manager, says such systems are basically “sophisticated pattern-recognition methods” yet they can nevertheless add value and improve productivity.

Schroders uses AI in tools that forecast the performance of companies after initial public offerings, monitor directors’ trades and analyse the language in transcripts of meetings.

Like many other businesses, the company also employs AI to automate low-judgment, repetitive back-office processes.

Interestingly, Schroders believes we may already be at “peak AI”, since the technology is “difficult to implement in a meaningful way for many of the high-complexity tasks that a typical knowledge worker does as part of their job”.

Professor Richard Susskind, author of Online Courts and the Future of Justice and technology adviser to the Lord Chief Justice of England and Wales, observes that “professionals invariably see much greater scope for the use of AI in professions other than their own”.

Elsewhere in professional services, law firms have applied language recognition to assess contracts, streamline redaction and sift materials for review in litigation cases, as well as to analyse judgments. The London firm Clifford Chance notes, however, that the facilitation of processes does not yet “transform the legal approach”.

Prof Susskind says: “I am in no doubt that much of the work of today’s lawyers will be taken on by tomorrow’s machines.” This could have major implications for how lawyers are trained and recruited.

Healthcare is another sector to benefit from AI’s rapid development.

Applied to large data sets, AI has identified new drug solutions, enabled the selection of candidates for clinical trials and monitored patients with specific conditions. Roche, for example, uses deep-learning algorithms to gain insights into Parkinson’s disease.

In the consumer sector, data and language analysis has been applied to develop translation apps, online moderation and product and content marketing. It has also identified epidemic outbreaks and verified academic papers.

In energy, Iberdrola, the Spanish multinational, has achieved efficiency gains that benefit both the company and the environment. It uses AI to improve the operation and maintenance of its assets through data analytics. Systems developed with machine learning co-ordinate the planning and delivery of maintenance, monitor electricity usage and optimise distribution.

Set against these advances, it should be acknowledged that AI has also worked in less benign ways: it has given criminals the means to commit sophisticated fraud and assisted in the creation and dissemination of “fake news”.

Sound recognition and analysis

Chatbots — software that can simulate conversation — have become the mainstay of many customer service centres and are used to answer questions on topics ranging from product options for online marketplaces to telephone inquiries at utilities and banks.

These digital assistants vary in sophistication and are limited by their command of what is known as “natural language processing”: the ability to treat words as more than mere inputs and outputs. This makes empathetic responses difficult to simulate, while the inability to comprehend context means that AI cannot distinguish a joke from a slur. Advances in this area could transform both the range of possible applications and acceptance by consumers.

Elsewhere AI developed by Huawei has been deployed by Rainforest Connection to fight illegal logging and poaching.

Dealing with images

Facial recognition is perhaps the best-known use of image analysis. From its application in identity verification to unlock mobile phones to its more sinister deployment by “surveillance states” (in the Xinjiang region of China, for instance), its adoption is increasingly widespread.

There remain significant drawbacks to the technology, not least its unreliability in identifying the faces of people of colour — just one of the many ethical problems connected to the use of AI.

Less controversially, image analysis is being used in the medical industry. It can help in the identification and diagnosis of diseases such as cancer and its performance in eye scans is at least as accurate as that of human specialists.

In 2018 the US Food and Drug Administration approved a retinal scan algorithm designed by IDx, an Iowa start-up, that can diagnose diabetic retinopathy without the need for an eyecare specialist. The implications for healthcare could be far-reaching, in terms of both changes in the skills needed and improved access to care.

Image recognition has also been put to use in environmental conservation. A platform called Ewa Guard, jointly developed by Lenovo and Bytelake, remotely counts trees and monitors the health of forests. Lenovo, which is based in Beijing, has joined forces with North Carolina State University in the US to apply deep-learning algorithms that identify farmland and monitor soil and crops to optimise water management.

A further possible application is in waste management, where image identification may assist robots to extract recyclable items based on logo or component recognition.

Personalisation

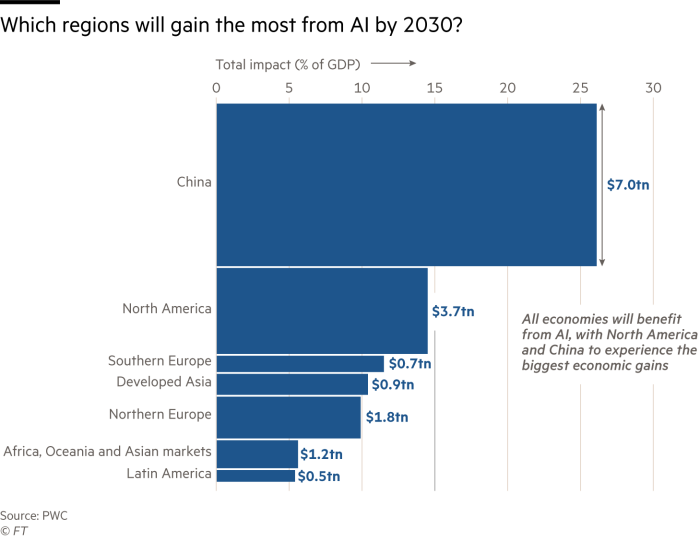

Personalisation of products and marketing is an area of rapid development which could greatly benefit manufacturers and retailers. A 2018 report from PwC, the Big Four accounting firm, estimated that the value derived from the effect of AI on consumer behaviour, for instance through product personalisation and an increase in free time, could be as much as $9.1tn by 2030.

Among the sophisticated algorithms used to personalise internet content is that of TikTok, the app that allows users to upload short videos. ByteDance, TikTok’s owner, revealed in June that its system is based on user interactions, video information and, to a lesser extent, device and account settings.

Cosmetics, too, can be personalised by data analysis. Companies such as Kao, a beauty group, use genetic data to tackle wrinkles and dermatological conditions.

Meanwhile the redesign of carmaking processes by Mercedes — converting “dumb” robots on its production line into human-operated, AI-assisted “cobots” — has enabled a previously impossible level of customisation, such that “no two cars coming off the production line are the same”, according to a report in Harvard Business Review.

So much for the way AI is being deployed in businesses around the world. What are the implications of its widespread adoption?

Businesses

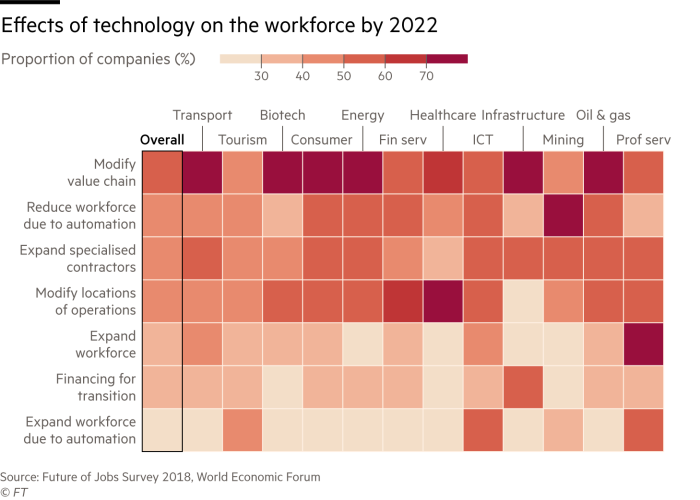

For a business to adopt AI with any degree of success it must have a coherent and active strategy. Equally critical is that the strategy is controlled centrally rather than executed piecemeal: businesses need to consider the use of AI holistically, so that entire processes are reimagined, along with the redesign of tasks to blend machine and employee skills.

FT panellist Ms Ming cited an example in which her company came up with a tool to eradicate inefficiencies in manufacturing processes. While the technology did what was needed, “the companies were not ready to act” as their entire workflows would have to change.

This perhaps offers an advantage to companies that operate without the burden of legacy processes, but incremental change is still better than none. Research by Automation Anywhere and Goldsmiths, University of London found that “[AI] augmented companies enjoy 28 per cent better performance levels compared with competitors”.

Buy-in from employees is also essential and can be made easier by including the workforce in the process of redesigning their roles. Lenovo suggests that in future “as teams become more experienced, part of their training will be focused . . . in identifying which parts of their work are suitable to deploy AI towards”. Communication and transparency with employees is critical to engendering trust in the adoption of AI.

IT systems, too, are likely to need a radical overhaul to function in an AI world, and those built from scratch will be more effective than bolt-ons to existing software. Although the cost may be daunting, Clifford Chance argues that the marginal cost of AI systems is relatively low once they are built and offset by the fact that AI can help to “significantly reduce the cost of providing legal services”.

As well as establishing ownership of AI strategy at board level, companies will need to consider how to deal with the ethical challenges the technology brings. The focus on environmental, social and governance (ESG) goals encouraged by the Covid-19 crisis adds to the need for more formalised ethics oversight on boards, to ensure that AI implementation conforms with corporate values. Could chief ethics officer be the next boardroom position?

Businesses will have to consider the risk of deploying AI from multiple perspectives, including the legal, regulatory and ethical.

In a global survey of 200 board members, Clifford Chance found that “88 per cent agreed (somewhat or strongly) that their board fully understands the legal, regulatory and ethical implications of their AI use”, but that “only 36 per cent of the same board members said they had taken preliminary steps to address the risks posed by lack of oversight for AI use”.

Employment

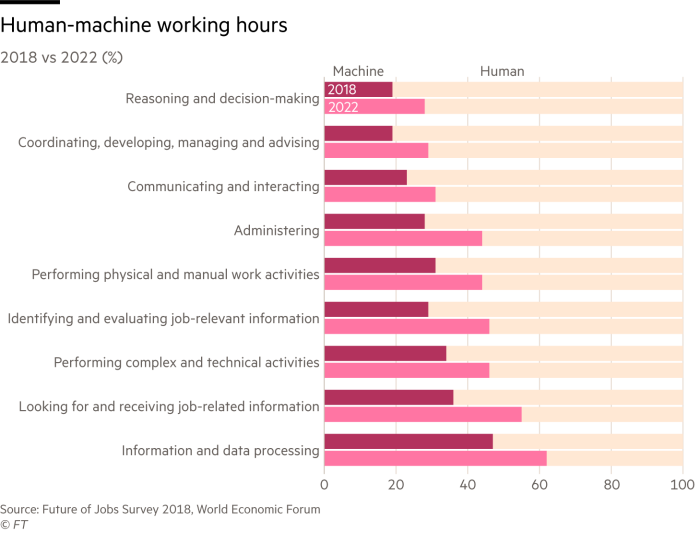

We are all familiar with blood-curdling predictions that AI could “steal our jobs”. The consensus among researchers, however, is that rather than put humans out of work, the adoption of AI is more likely to change both the nature of the jobs we do and how we carry them out.

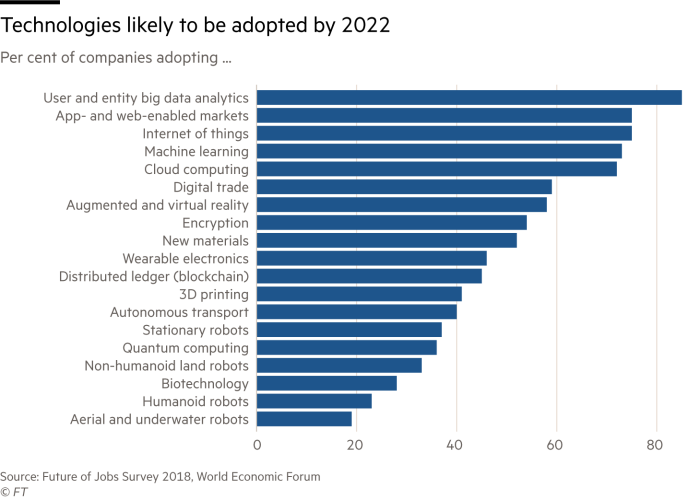

In its Future of Jobs Report 2018 the World Economic Forum cited one set of estimates indicating that while 75m jobs may be displaced, 133m could be created to adapt to “the new division of labour between humans, machines and algorithms”.

Carl Frey, author of The Technology Trap and director of the Future of Work programme at Oxford Martin School, estimated in 2013 that 47 per cent of US jobs (based on occupation classifications) were at risk of automation, while UK categorisations gave a figure of 35 per cent.

These numbers have been widely debated but Mr Frey observes that they account for those jobs that can be restructured in order to be automated — and individuals can be allocated new tasks as long as they acquire fresh skills.

While occupations involving, say, the ability to navigate social relations are to a large extent secure, Mr Frey points out that this is true mainly for more complex interactions. For example, fast-food outlets, where interaction is not integral to the appeal of a product, use more automation technology than fine-dining restaurants.

As businesses’ reliance on AI increases, it is clear that a redistribution of labour is inevitable. To deal with the shift in skills that this implies, retraining the workforce is critical. The WEF notes that on average about half of the workforce across all sectors will require some retraining to accommodate changes in working patterns brought about by AI.

Prof Luckin points out that businesses have a huge amount of data on their staff that could be invaluable to understanding how to optimise redeployment. “The savvy businesses will be really trying to understand their current workforce and what workforce they need, and looking to see how they can retrain on that basis.”

Much of that education is likely to go to the higher-skilled segment of the workforce, and “saving people”, if not “saving jobs”, will have to be considered. In the first instance, the burden may fall to governments, but the threat to low-skilled workers could require businesses to pick up the slack, especially given the additional pressures caused by Covid-19.

So far it appears that the pandemic has accelerated the trend towards automation. The effect is being felt in call centres, part of an outsourcing services industry worth nearly $25bn to the Philippines in 2018. Even before the pandemic, the IT and Business Process Association of the Philippines noted that the increase in headcount in 2017 and 2018 had been just 3.5 per cent, against a forecast of nearly 9 per cent. One of the reasons for this is increased automation.

Call centre operators in countries such as the Philippines and India have suffered further from the requirement to work from home during the pandemic. They have been hampered by poor infrastructure, which ranges from a lack of IT equipment or fast internet to security considerations when dealing with customers’ financial information.

At the end of April, US-based outsourcer [24]7.ai said demand for some automated products had risen by half since the beginning of the year, well ahead of the call for human services.

Food preparation roles may also be at increasing risk of redundancy because of automation spurred by Covid-19, according to the European Centre for the Development of Vocational Training. The advent of robots such as Flippy, which can cook burgers and french fries and knows when to clean its own tools, shows that such a shift is not out of the question.

One domain in which AI has failed to encroach successfully, says Mr Frey, is the arts: creative output that is original and makes sense to people has not yet been successfully replicated, even if an algorithm could be programmed to produce something that sounds similar to Mozart.

“The reason is simply that artists don’t just draw upon pre-existing works, they draw upon experiences from all walks of life — maybe even a dream — and a lot of our experiences are always going to be outside of the training dataset.”

Mr Frey’s point is echoed by Prof Wooldridge, who said people will have to wait a long time for works created by AI that would “deeply engage” them.

Education

AI affects education in many ways. People will need to be taught what AI is and how to use it, as well as the way its inputs and outputs are conceived. Education is also crucial to establishing public trust.

This summer’s school exam-grading controversy in the UK shows what happens when trust in computer-generated results is eroded. An automated system designed to award A-level grades in line with previous years led to a public outcry. A lack of transparency about how the algorithms would work, combined with a lack of confidence in the metrics used, undermined the exercise.

Prof Luckin stresses that if public consent and trust are to be gained, then AI-driven processes should be both transparent and easily explained.

Data literacy will be hugely important, says Prof Luckin, to ensure that people are equipped to assess and refine AI output.

“That’s the real problem. It was an algorithm and they took the human out of the loop. It needed much more human intervention with the data. It is just having someone who is contextually aware going ‘hang on a minute, that’s not going to work’.”

Finally, AI can also be used as a pedagogical tool, complementing the work of human teachers. It can assess our ability to learn and advise us on the best way to retain information. For example, Up Learn, a UK company, offers learning “powered by AI and neuroscience” and promises a refund in the event that customers do not achieve a top grade.

Ethics and bias

The widespread adoption of AI obviously raises ethical challenges, but numerous organisations have sprung up to monitor and advise on best practice. These include AI for Good, the AI Now Foundation and Partnership on AI.

Governments are also taking steps, with more than 40 countries adopting the OECD Principles on Artificial Intelligence in May 2019 as a “global reference point for trustworthy AI”. At about the same time, China released its Beijing AI Principles. In July, the European Commission published the results of its white paper consultation canvassing views on regulation and policy.

Despite this there is no globally agreed set of standards: regulation remains piecemeal.

The British A-level controversy drew attention to the problem of historical bias, showing how AI is dependent on data and programming inputs.

Diversity is another problem, both in the poor representation of women among AI professionals and in how AI is developed. Facial recognition, for instance, works best on white male faces, a “technical problem” that, Ms Ming noted, there is limited incentive to fix in the absence of regulatory enforcement.

On the other hand, AI can help to promote diversity through “colour-blind” recruitment processes. Schroders, for example, uses AI tools when it looks for early-career trainees and graduates. “Given that the alternative is people looking at candidates’ CVs (with ample scope to favour candidates like themselves),” the company says, “this can be much more fair.”

Facial recognition technology raises further ethical concerns in relation to surveillance — for instance, of the Uighur population in China.

Abuse of data harvested through facial recognition is not restricted to the state, however. Identity fraud and data privacy are significant problems.

In July, UK and Australian regulators announced a joint investigation of Clearview AI, the facial recognition company whose image-scraping tool has been used by police forces around the world.

Other ethical problems loom. Gartner says that by 2022 one-tenth of personal devices will have “emotion AI” capabilities, allowing them to recognise and respond to human emotions, which will present opportunities for manipulative marketing. Accenture advises that the groundwork for the ethically responsible use of such technology needs to be laid now.

What does the future hold?

Businesses and employees alike need to be prepared for what is likely to be widespread and sometimes bewildering change as a result of AI adoption, and the ethical and regulatory challenges that will come with it.

“Doubters find it hard to grasp that the pace of technological change is accelerating, not slowing down,” says Prof Susskind.

“There is no apparent finishing line. Machines will outperform us not by copying us but by harnessing the combination of colossal quantities of data, massive processing power and remarkable algorithms.”

This article is part of the FT Future Forum — an authoritative space for businesses to share ideas, build relationships and develop solutions to future challenges.
